Case Study - Redefining Nutrition Tracking with AI

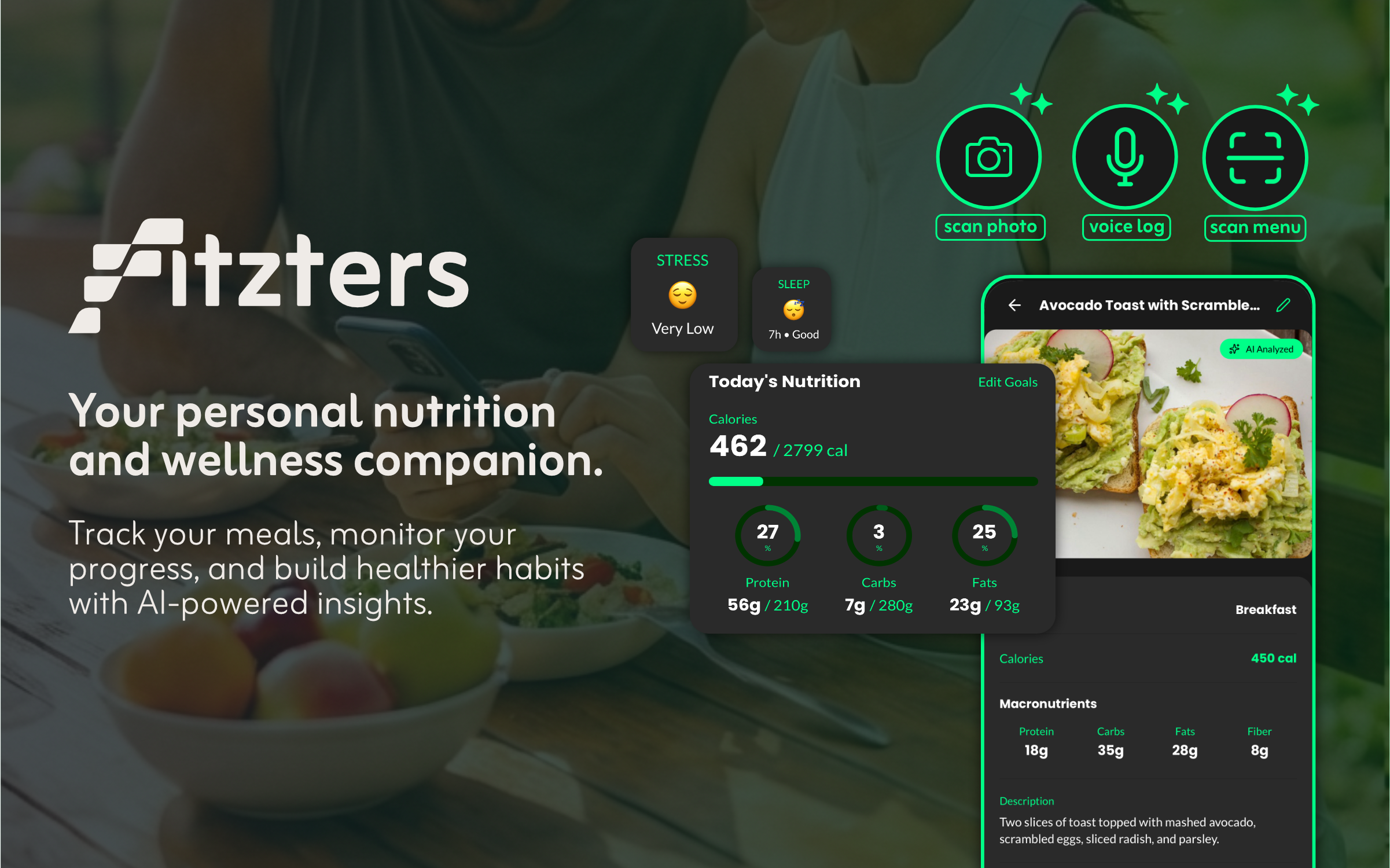

From concept to launch, we built an AI-first nutrition tracker that eliminates manual entry using computer vision and voice recognition.

- Client: Fitzters

- Year

- Service: Venture Building, AI Engineering, Mobile Development

Overview

Nutrition tracking has historically been a tedious process involving endless database searches and manual data entry. We saw an opportunity to disrupt this space by incubating Fitzters, a product designed to remove the friction from healthy habits.

As a company builder, Everseed handled the entire lifecycle of Fitzters, from the initial "napkin sketch" to the final deployment on the App Store. Our goal was to prove that AI could turn a complex data-entry task into a magical, one-tap interaction.

The Challenge

The primary reason users abandon fitness apps is "tracking fatigue." Our research showed that if logging a meal takes more than 30 seconds, users eventually stop doing it.

We faced a significant technical and design challenge: How do we build a system that understands food like a human does? We needed to process unstructured inputs (photos of messy plates, voice descriptions like "a bowl of oatmeal with berries") and return structured, accurate nutritional data instantly.

Our Process

1. Ideation & Prototyping

We started by testing the limits of current vision models. We built quick prototypes to validate whether AI could accurately distinguish between similar foods (e.g., a latte vs. a flat white). Once validated, we moved to mapping user journeys that prioritized speed above all else.

2. Design & User Experience

We deliberately avoided the sterile, medical aesthetic common in health apps. Our design team created a "Vibe" system that lets the UI adapt to the user's mood through three themes: Neon Green, Electric Pink, and Sunset Orange. We focused on micro-interactions that make the app feel alive and responsive.

3. Engineering the AI Pipeline

The core of Fitzters is its "Snap & Track" engine. We engineered a pipeline that:

- Captures high-res images directly from the camera.

- Pre-processes images on-device for speed.

- Sends the pre-processed image to our fine-tuned vision models to identify ingredients and estimate volume.

- Returns a complete macro breakdown in under 2 seconds.
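The four steps above can be sketched roughly as follows. This is a minimal illustration, not the Fitzters implementation: every name here (`Stages`, `DetectedItem`, `macroBreakdown`, `snapAndTrack`) is hypothetical, the stages are injected as plain functions so the sketch runs without a camera or network, and the assumption that the vision step returns a portion weight plus per-100 g reference values is ours.

```typescript
// Hypothetical types for illustration; none of these names come from the Fitzters codebase.
interface Macros {
  calories: number;
  proteinG: number;
  carbsG: number;
  fatG: number;
}

interface DetectedItem {
  name: string;
  grams: number;    // portion size estimated by the vision step (assumed output shape)
  per100g: Macros;  // reference values per 100 g (assumed to come from a lookup)
}

// Each pipeline stage is injected, so real camera or model calls can be swapped in later.
interface Stages {
  capture: () => Promise<Uint8Array>;
  preprocess: (image: Uint8Array) => Uint8Array;
  identify: (image: Uint8Array) => Promise<DetectedItem[]>;
}

// Scale each item's per-100 g values by its estimated weight and sum the results.
function macroBreakdown(items: DetectedItem[]): Macros {
  const total: Macros = { calories: 0, proteinG: 0, carbsG: 0, fatG: 0 };
  for (const item of items) {
    const scale = item.grams / 100;
    total.calories += item.per100g.calories * scale;
    total.proteinG += item.per100g.proteinG * scale;
    total.carbsG += item.per100g.carbsG * scale;
    total.fatG += item.per100g.fatG * scale;
  }
  return total;
}

// Run capture -> preprocess -> identify -> breakdown and report whether
// the whole run stayed within the latency budget (2 seconds by default).
async function snapAndTrack(
  stages: Stages,
  budgetMs: number = 2000
): Promise<{ macros: Macros; elapsedMs: number; withinBudget: boolean }> {
  const start = Date.now();
  const raw = await stages.capture();
  const prepared = stages.preprocess(raw);
  const items = await stages.identify(prepared);
  const macros = macroBreakdown(items);
  const elapsedMs = Date.now() - start;
  return { macros, elapsedMs, withinBudget: elapsedMs <= budgetMs };
}
```

Keeping the arithmetic in a pure function such as `macroBreakdown` makes the nutrition math testable on its own, separate from the camera and model stages.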

Architecture & Tech Stack

We built Fitzters on a modern, scalable stack designed for performance:

- Mobile Framework: React Native (Expo) allowed us to ship to both iOS and Android from a single codebase, ensuring feature parity.

- AI Integration: We used Gemini for its multimodal capabilities, combining vision and text analysis to handle complex voice logs and menu scans.

- Backend: A serverless architecture on Google Cloud ensures the app scales automatically during peak meal-time traffic.
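One practical detail when turning unstructured input into structured data is checking the model's reply before it reaches the user's log. The sketch below assumes the model is prompted to answer with a JSON array of `{ name, grams }` objects. That response shape, and the names `LoggedFood` and `parseMealResponse`, are illustrative assumptions, not the actual Fitzters or Gemini contract.

```typescript
// Hypothetical shape of one logged food; the real schema is not described in this case study.
interface LoggedFood {
  name: string;
  grams: number;
}

// Parse and validate a model reply that is assumed to be a JSON array of foods.
// Throws with a descriptive message instead of silently logging bad data.
function parseMealResponse(text: string): LoggedFood[] {
  let data: unknown;
  try {
    data = JSON.parse(text);
  } catch (err) {
    throw new Error("model response is not valid JSON");
  }
  if (!Array.isArray(data)) {
    throw new Error("expected a JSON array of foods");
  }
  return data.map((entry: unknown, index: number): LoggedFood => {
    if (typeof entry !== "object" || entry === null) {
      throw new Error(`entry ${index} is not an object`);
    }
    const { name, grams } = entry as Record<string, unknown>;
    if (typeof name !== "string" || name.trim() === "") {
      throw new Error(`entry ${index} has no food name`);
    }
    if (typeof grams !== "number" || !Number.isFinite(grams) || grams <= 0) {
      throw new Error(`entry ${index} has an invalid weight`);
    }
    return { name: name.trim(), grams };
  });
}
```

For example, a voice log such as "a bowl of oatmeal with berries" might come back as `[{"name":"oatmeal","grams":200},{"name":"berries","grams":50}]`, which this function would accept, while a reply containing free text or a negative weight would be rejected.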

Testing & Iteration

Before launch, we ran a beta program with 500 users. We learned that "accuracy" wasn't just about the numbers. It was about trust. Beta users told us that eating out was a major pain point, so we added features like the Menu Analyzer. We also refined the voice recognition to better handle slang and colloquialisms.

The Impact

Fitzters demonstrates Everseed's ability to identify a user problem and solve it with cutting-edge technology. By abstracting away the complexity of AI, we delivered a product that feels simple, intuitive, and helpful.

"The most accurate AI logging I've seen. Perfect for tracking my campus meals." | Sarah Chen

Visit fitzters.com to see it in action.